I’m working on a data set with AMR for audio. AMR = Average Minute Rating, in essence how many listeners your content has had on average, each minute. You can think of it as a measure of your audience being spread out evenly over the content, from start to finish.

To calculate your AMR you need to know the total number of minutes people have listened to your content and then, of course, the length of the content. So if your audio content is ten minutes long and your analytics tells you that you have a total of 43 minutes of listening, that would give you an AMR of 4.3 (= on average, 4.3 people listened to the content for its entire duration).
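The arithmetic above fits in a few lines of Python. This is just a minimal sketch: the function name `average_minute_rating` and its parameter names are my own, not something your analytics tool will give you.

```python
def average_minute_rating(total_listened_minutes: float,
                          content_length_minutes: float) -> float:
    """AMR = total minutes listened / length of the content in minutes."""
    if content_length_minutes <= 0:
        raise ValueError("content length must be a positive number of minutes")
    return total_listened_minutes / content_length_minutes


# The example from the text: 43 minutes of listening on a ten-minute episode.
amr = average_minute_rating(43, 10)
print(amr)  # 4.3
```

Both values need to be in the same unit, so convert seconds or hours to minutes before dividing.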

My assumption is, at least when it comes to well-established audio content, like pods running for tens of episodes, that the AMR is more or less the same for each episode. Or at least within the same ballpark.

However, at times your data might contain odd numbers. Way too small or way too big numbers. So are these outliers, or should you believe that there actually were that few/many listeners at that particular time? Well, there’s no easy answer to that. You need to do some exploratory analysis and have a thorough look at your data.

First, especially if you run into this kind of data often, I would establish some kind of rule of thumb as to what is a normal variation in AMR in your case. For some content, variation might be small, and thus even small deviations from the “normal” should be singled out for further analysis. In other cases the AMR varies a lot, and then you should be more tolerant.
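One possible way to turn such a rule of thumb into code is to measure how far each episode sits from the median AMR, in units of the typical deviation (the median absolute deviation, MAD). This is only a sketch under my own assumptions: the function name `flag_amr_candidates`, the `tolerance` of 3 and the AMR values in the example are all made up for illustration, and a flagged episode is a candidate for a closer look, not a confirmed outlier.

```python
from statistics import median


def flag_amr_candidates(amr_values, tolerance=3.0):
    """Return the indices of AMR values that deviate from the median
    by more than `tolerance` times the median absolute deviation (MAD).

    `tolerance` is an assumed rule-of-thumb knob: lower it for content
    with a stable AMR, raise it for content that naturally varies a lot.
    """
    center = median(amr_values)
    mad = median(abs(v - center) for v in amr_values)
    if mad == 0:
        # No typical variation at all: anything off the median stands out.
        return [i for i, v in enumerate(amr_values) if v != center]
    return [i for i, v in enumerate(amr_values)
            if abs(v - center) / mad > tolerance]


# Made-up AMR values for eight episodes, purely for illustration.
episode_amr = [4.1, 4.3, 4.0, 4.4, 9.8, 4.2, 0.6, 4.3]
print(flag_amr_candidates(episode_amr))  # [4, 6]
```

I picked the median and MAD here rather than the mean and standard deviation because a single extreme episode barely moves them, but that is a design choice, not the only sensible one.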

Then, after identifying the potential outliers, you need to start playing detective. Can you find any explanation as to why the AMR is exceptionally high or low? What date did you publish the content? Was it a holiday, when your audience had more time than usual to listen to the content, or did some special event occur that day that drew people away from it? Again, there is no one rule to apply; you need to judge for yourself.

Another thing to consider is the content: Was the topic especially interesting/boring? Did you have a celebrity as a guest on your pod/did you not have one (if you usually do)? Was the episode much longer/shorter than usual? Was it published within the same cycle, like day of week/month, as you usually do? Did you have technical difficulties while recording that affected the quality? And so on, and so on…

It all boils down to knowing your content, examining it from as many different perspectives as possible, and then making a qualified judgement as to whether the AMR is to be considered an outlier or not. Only then can you decide which values to filter out and which to keep.

When you are done with this, you can finally start the analysis of the data. As always, cleaning the data takes 80% of your time and the actual analysis 20% – or was it 90%-10…?

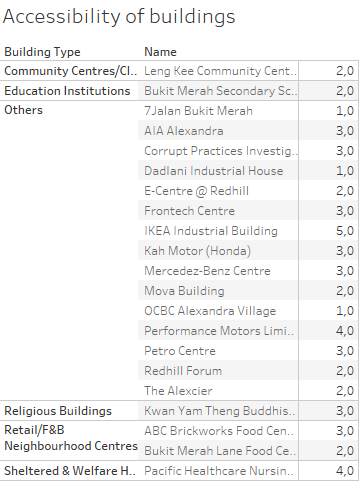

P.S. Sometimes it helps to visualise – but not always: